Generation and Mitigation of Hazardous Scenarios at Traffic Intersections

ABOUT THE PROJECT

At a glance

Safety remains a major hurdle to the wider adoption of autonomous vehicle (AV) technology. A safety report published by Waymo in October of last year [1] has become a virtual roadmap for automated vehicle developers. The report states that Waymo has accumulated “over 3.5 million miles of real-world experience”. At the same time, the 2016 consumer study by MIT AgeLab and the New England Motor Press Association [2] shows that of roughly 3,000 people asked about their interest in self-driving cars, nearly half (48 percent) said they would never purchase a car that completely drives itself. Respondents said they were uncomfortable with the loss of control, did not trust the technology, and did not feel that self-driving cars are safe. One perspective is that the 3.5+ million miles of real-world driving accumulated by the industry leader is but a drop in the bucket of over 18 trillion miles driven by Americans during the same period.

A common response to this challenge is simulation. Waymo, for example, writes [1]: “In simulation, we rigorously test any changes or updates to our software before they’re deployed in our fleet. We identify the most challenging situations our vehicles have encountered on public roads, and turn them into virtual scenarios for our self-driving software to practice in simulation.” In 2016, Waymo simulated 2.5 billion self-driving miles, and in 2017 it increased its simulation productivity by 25%. The problem: do these simulations capture sufficiently many interesting road and traffic scenarios, including corner-case scenarios that could lead to unsafe situations?

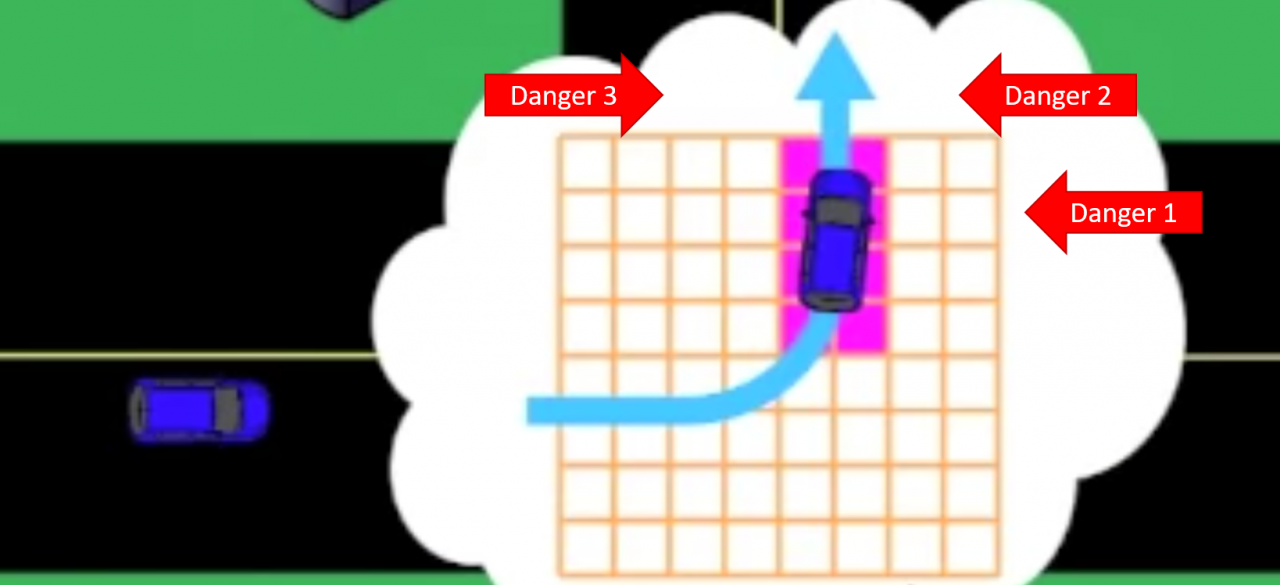

A particularly challenging set of scenarios arises at traffic intersections, especially in urban environments. An intersection is a well-defined, yet arguably the most complex, unit of traffic network infrastructure. The complexity comes from simultaneous interactions between agents of different classes (vehicles, bicyclists, and pedestrians), from possible occlusions, and from various types of violations. Establishing the safety of an AV in such an environment is very challenging. Some attempts have been made to establish a mathematical model that absolves a self-driving car from blame for an incident, “as long as it follows a predetermined set of clear rules for fault in advance” [3]. However, this approach has a major shortcoming: a car that follows the rules may still get into an avoidable accident caused by someone else, as in the case of the infamous Uber crash in Tempe, AZ in March 2017 [4]. To avoid such scenarios, it is desirable for the autonomous vehicle to cooperate with other agents and with intelligent traffic infrastructure. The problem: how to develop such a cooperative approach between the AV and intelligent traffic infrastructure?

The goal of our project is to create a simulation-driven methodology for the automatic generation of hazardous scenarios at traffic intersections, and to use the results to design information sharing and cooperation between the autonomous vehicle and the infrastructure. Achieving this goal will address the two problems stated above: (1) by focusing the simulation effort on the most safety-critical situations, and (2) through systematic design of intelligent intersections that cooperate with autonomous driving systems.
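To make the idea of automatically generating hazardous scenarios concrete, the following is a minimal sketch and not the project's actual methodology. It assumes a deliberately simplified intersection: two agents travel at constant speed along perpendicular paths toward a shared conflict point, scenarios are drawn by plain random sampling, and a scenario is flagged as hazardous when the minimum separation falls below a threshold. The names `Scenario`, `min_separation`, `sample_scenario`, and `find_hazardous`, as well as all parameter ranges and the 2 m threshold, are hypothetical choices made for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """Two agents approaching a shared conflict point on perpendicular paths."""
    av_distance_m: float      # AV's initial distance to the conflict point
    av_speed_mps: float       # AV's constant speed
    other_distance_m: float   # other agent's initial distance to the conflict point
    other_speed_mps: float    # other agent's constant speed


def min_separation(s: Scenario, horizon_s: float = 10.0, dt: float = 0.05) -> float:
    """Smallest distance between the agents over the horizon (constant speeds assumed)."""
    best = float("inf")
    steps = round(horizon_s / dt)
    for k in range(steps + 1):
        t = k * dt
        # The conflict point is the origin; the AV moves along x, the other agent along y.
        x = s.av_distance_m - s.av_speed_mps * t
        y = s.other_distance_m - s.other_speed_mps * t
        best = min(best, (x * x + y * y) ** 0.5)
    return best


def sample_scenario(rng: random.Random) -> Scenario:
    """Draw one scenario uniformly from hypothetical parameter ranges."""
    return Scenario(
        av_distance_m=rng.uniform(20.0, 60.0),
        av_speed_mps=rng.uniform(5.0, 15.0),
        other_distance_m=rng.uniform(20.0, 60.0),
        other_speed_mps=rng.uniform(5.0, 20.0),
    )


def find_hazardous(n_samples: int, threshold_m: float = 2.0, seed: int = 0):
    """Return (separation, scenario) pairs below the threshold, most severe first."""
    rng = random.Random(seed)
    hazards = []
    for _ in range(n_samples):
        s = sample_scenario(rng)
        sep = min_separation(s)
        if sep < threshold_m:
            hazards.append((sep, s))
    hazards.sort(key=lambda pair: pair[0])
    return hazards


if __name__ == "__main__":
    for sep, scenario in find_hazardous(2000)[:5]:
        print(f"{sep:.2f} m  {scenario}")
```

Uniform random sampling is used here only because it is the simplest possible search. A realistic study would replace the toy kinematics with a traffic simulator and would likely guide the search toward low-separation regions rather than sampling blindly.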

References

- [1] Waymo. On the Road to Fully Self-Driving. Safety Report. https://waymo.com/safetyreport

- [2] H. Abraham, B. Reimer, B. Seppelt, C. Fitzgerald, B. Mehler, J.F. Coughlin. Consumer Interest in Automation: Preliminary Observations Exploring a Year’s Change. Whitepaper, MIT AgeLab, February 2017. http://agelab.mit.edu/sites/default/files/MIT%20-%20NEMPA%20White%20Paper%20FINAL.pdf

- [3] S. Shalev-Shwartz, S. Shammah, A. Shashua. On a Formal Model of Safe and Scalable Self-Driving Cars. Mobileye, 2017. https://arxiv.org/abs/1708.06374

- [4] D. Muoio. Police: The Self-Driving Uber in the Arizona Crash Was Hit Crossing an Intersection on Yellow. Business Insider, March 2017. http://www.businessinsider.com/uber-self-driving-car-accident-arizona-police-report-2017-3

| principal investigators | researchers | themes |
|---|---|---|
| Sanjit A. Seshia and Pravin Varaiya | | intelligent traffic intersections, control, simulation, verification, hazard analysis |