Measuring Prediction Trustworthiness and Safety Through Neural Network Loss Landscape Analysis

ABOUT THE PROJECT

At a glance

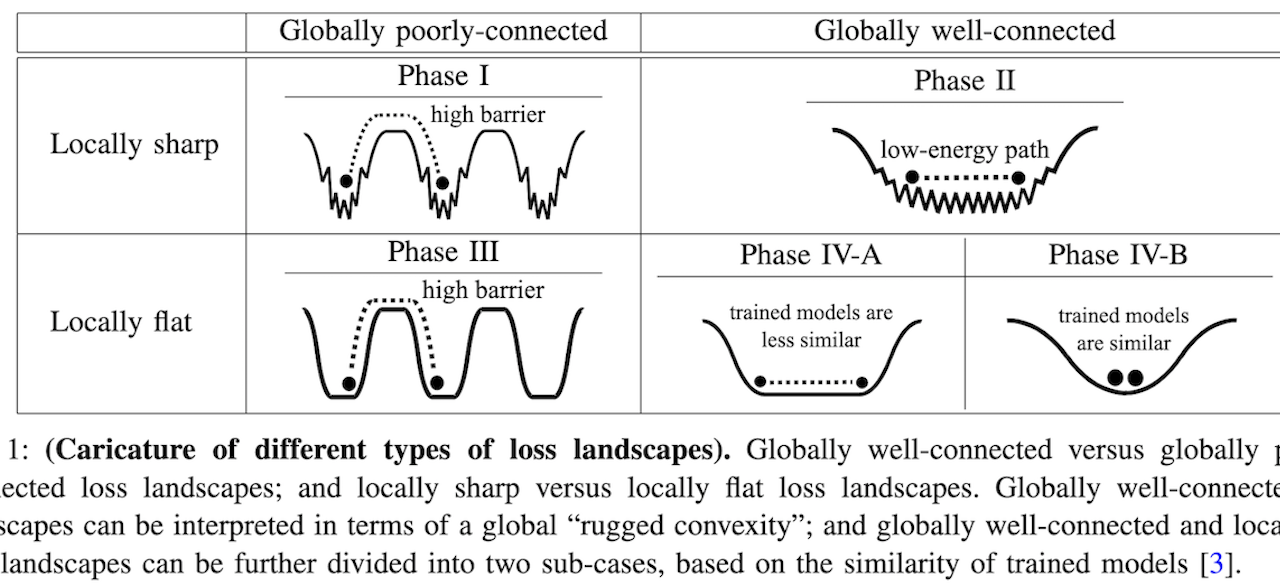

Deep neural networks are increasingly deployed in safety-critical components, and quantifying their trustworthiness and safety is an ongoing challenge. Metrics such as test accuracy alone do not indicate whether a model is safe, because the model may not be robust to out-of-domain or adversarially perturbed data. Recent empirical studies have shown that metrics obtained by measuring “loss landscapes” are the most accurate for predicting generalization among more than forty existing generalization metrics [1, 2]. In this proposal, we identify several inefficiencies of prior metrics used to quantify model robustness, with a focus on analyzing safety through the loss landscape of the model. We plan to perform a multi-faceted study and pursue the following research thrusts: (i) study “global” structural properties of loss landscapes instead of local properties; (ii) study generalization metrics that can be calculated without accessing any training or test data; and (iii) develop metrics that characterize the quality of learning models directly, instead of the generalization gap (test loss minus training loss) often studied in the literature. Furthermore, we plan to study how these safety metrics, calculated from loss landscapes, can improve model robustness in two respects: (i) using the loss landscape metrics to robustly quantify generalization when environmental factors change or the data or model is adversarially perturbed; and (ii) coupling these metrics with a Neural Architecture Search framework to adapt the model architecture and make it more robust.
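To make two of the terms above concrete, the following is a minimal sketch of the generalization gap (test loss minus training loss) and of a one-dimensional slice through a loss landscape. It is illustrative only and is not the project's metric: a small linear classifier on synthetic data stands in for a deep network, and the function names (`logistic_loss`, `generalization_gap`, `loss_slice`) are hypothetical.

```python
import numpy as np

def logistic_loss(w, X, y):
    """Mean logistic loss of a linear classifier; labels y are in {-1, +1}."""
    # log(1 + exp(-y * (X @ w))), computed in a numerically stable way
    return float(np.mean(np.logaddexp(0.0, -y * (X @ w))))

def generalization_gap(w, X_train, y_train, X_test, y_test):
    """Generalization gap: test loss minus training loss."""
    return logistic_loss(w, X_test, y_test) - logistic_loss(w, X_train, y_train)

def loss_slice(w, X, y, direction, alphas):
    """Loss evaluated along the line w + alpha * d, with d the unit direction."""
    d = direction / np.linalg.norm(direction)
    return np.array([logistic_loss(w + a * d, X, y) for a in alphas])

# Synthetic data (an assumption for illustration, not from the project).
rng = np.random.default_rng(0)
w_true = rng.normal(size=5)
X_train = rng.normal(size=(200, 5))
X_test = rng.normal(size=(200, 5))
y_train = np.sign(X_train @ w_true)
y_test = np.sign(X_test @ w_true)

# Fit the weights with plain gradient descent on the training loss.
w = np.zeros(5)
for _ in range(500):
    margins = y_train * (X_train @ w)
    grad = -(X_train * (y_train / (1.0 + np.exp(margins)))[:, None]).mean(axis=0)
    w -= 0.5 * grad

gap = generalization_gap(w, X_train, y_train, X_test, y_test)
alphas = np.linspace(-1.0, 1.0, 21)
profile = loss_slice(w, X_train, y_train, rng.normal(size=5), alphas)
print(f"generalization gap: {gap:.4f}")
print("loss along a random direction:", np.round(profile, 3))
```

A slice like `profile` only describes the landscape near one solution along one direction, so it is a local view; the global structural properties named in thrust (i) would need measurements that go beyond a single slice of this kind.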

| principal investigators | researchers | themes |
|---|---|---|
| | | Trustworthiness and Safety, Deep Learning Generalization Metrics, Loss Landscapes, Robustness |

Continuing work will be found under the project Improving OOD Generalization Through Metric-informed Weight-space Augmentation and Architecture Search.