Modeling and Learning Multi-Agent Behaviors for Simulation and Analysis of Autonomous Driving Scenarios

ABOUT THE PROJECT

At a glance

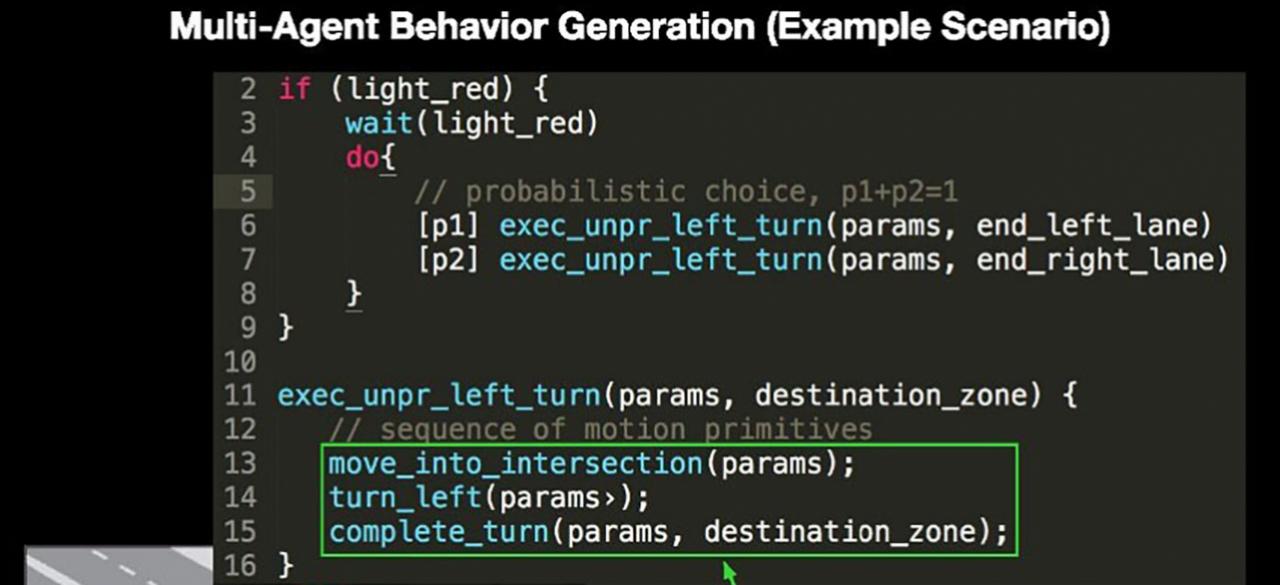

A major challenge facing developers of autonomous driving technology is modeling the environment of the autonomous vehicle. Specifically, there is a need for systematic, algorithmic techniques for generating the behaviors of traffic participants (e.g., cars, pedestrians, and cyclists) in simulation and for the design of autonomous vehicles (AVs). Current practice involves engineers hand-coding each scenario and fuzzing the trajectories of different agents on the road for testing. This approach is neither scalable nor exhaustive enough to model and explore the myriad possible scenarios. A central question is: how can we develop generative models of multi-agent behaviors, including those of humans, and formalize a language to modularly construct these models for the simulation, design, and testing of AVs?

A few recent works propose generative models for multi-agent behaviors. However, these focus on mimicking the demonstrated agents rather than on creating realistic new behaviors that differ from the demonstrations. Some of these works also learn tactics as policies represented by deep neural networks (NNs). The difficulty of interpreting and controlling these NNs again makes creating new behaviors a challenge. Other techniques for learning human agent models, such as inverse reinforcement learning, can be effective for specific situations (e.g., lane changes); however, they do not scale well to multi-agent models or to complex scenarios that compose multiple maneuvers. In our project, we propose an approach to algorithmically generate interpretable multi-agent models in a modular, reusable, and efficient manner.

| PRINCIPAL INVESTIGATORS | RESEARCHERS | THEMES |
|---|---|---|
| Alberto Sangiovanni-Vincentelli, Sanjit Seshia | | environment modeling, multi-agent behaviors, compositional modeling, simulation, verification, program learning, behavior generation, generative models |