Off-Policy Reinforcement Learning for Learning from Logged Data

ABOUT THIS PROJECT

At a glance

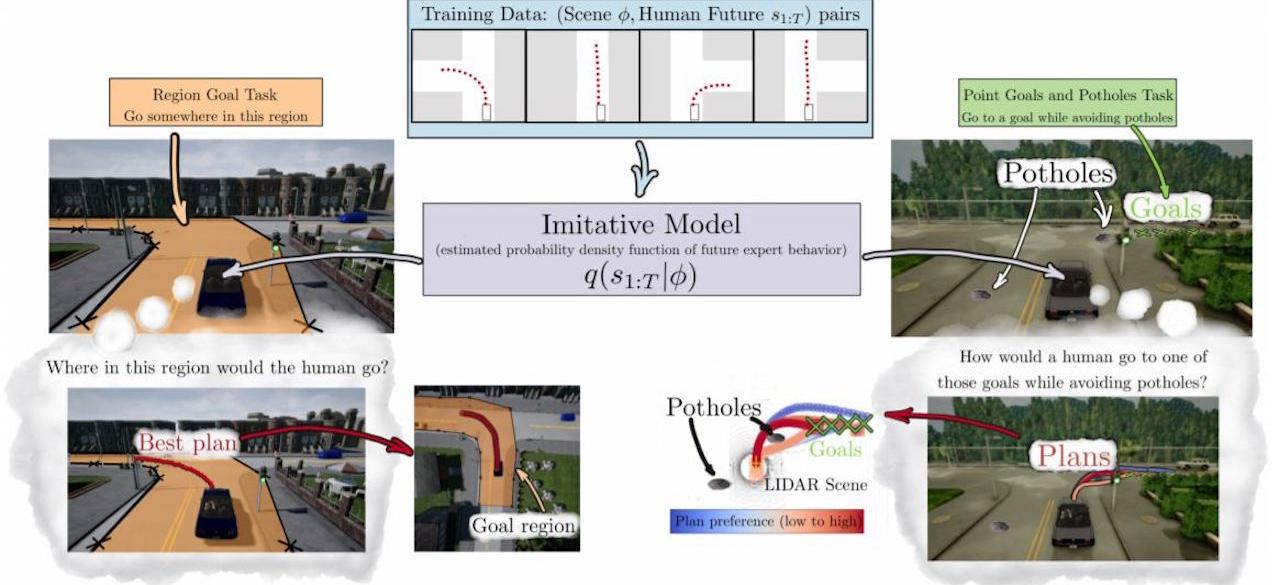

Reinforcement learning has emerged as a highly promising technology for automating the design of control policies. Deep reinforcement learning in particular holds the promise of removing the need for manual design of perception and control pipelines, learning the entire policy -- from raw observations to controls -- end to end. However, reinforcement learning is conventionally regarded as an online, active learning paradigm: a reinforcement learning agent actively interacts with the world to collect data, uses this data to update its behavior, and then collects more data. While this is feasible in simulation, real-world autonomous driving applications are not conducive to this type of learning process: a partially trained policy cannot simply be deployed on a real vehicle to collect more data, as this would likely result in a catastrophic failure. This leaves us with two unenviable options: rely entirely on simulated training, or manually design perception, control, and safety mechanisms. In this project, we will investigate a third option: fully off-policy reinforcement learning. In principle, dynamic programming methods, such as Q-learning, can operate entirely on previously logged data (e.g., logged driving data from human drivers), without any additional online data collection. In practice, such methods tend to struggle when they are not permitted to collect any additional data. We will identify the reasons for this, and design a new generation of fully off-policy reinforcement learning algorithms that can utilize large logged datasets to train end-to-end policies for autonomous control, for application domains such as autonomous driving.
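To make the idea of running Q-learning purely on logged data concrete, here is a minimal tabular sketch. It is an illustration under stated assumptions, not the project's method: the function name `offline_q_learning`, the three-state chain, and the four logged transitions are all hypothetical, and a real driving dataset would call for function approximation rather than a table.

```python
import numpy as np

def offline_q_learning(transitions, n_states, n_actions,
                       gamma=0.9, lr=0.5, epochs=200):
    """Tabular Q-learning over a fixed log of (s, a, r, s_next, done) tuples.

    Hypothetical helper for illustration: it only replays the log and never
    queries an environment for new data.
    """
    q = np.zeros((n_states, n_actions))
    for _ in range(epochs):
        for s, a, r, s_next, done in transitions:
            # Bootstrapped target built from the logged transition alone.
            target = r if done else r + gamma * q[s_next].max()
            q[s, a] += lr * (target - q[s, a])
    return q

# Assumed toy log: in a 3-state chain, action 1 moves right and action 0 stays.
# Reaching state 2 ends the episode with reward 1.
logged = [
    (0, 1, 0.0, 1, False),
    (0, 0, 0.0, 0, False),
    (1, 1, 1.0, 2, True),
    (1, 0, 0.0, 1, False),
]

q = offline_q_learning(logged, n_states=3, n_actions=2)
greedy = q.argmax(axis=1)  # greedy action per state under the learned Q-table
```

In this toy case the log covers every state-action pair that matters, so the greedy policy moves right in states 0 and 1. Any pair absent from the log is never updated in this sketch and simply keeps its initial value of zero.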

| principal investigators | researchers | themes |
|---|---|---|
| Sergey Levine | | off-policy reinforcement learning, data-driven control, Q-learning, uncertainty |